|

|

本帖最后由 Reader86 于 2022-12-24 01:52 AM 编辑

The limits of knowledge

Gödel, Turing, and the science of what we can and cannot know

In the seventeenth century, German mathematician Gottfried Leibnitz proposed a machine that could read any mathematical statement as input and determine whether it is true or false, based on the axioms of Mathematics. But is every statement decidable like that? Or are the limits to what we can know? This question has become known as the Entscheidungsproblem (decision problem).

Two centuries later, another German mathematician, David Hilbert, declared optimistically that the answer to the Entscheidungsproblem had to be, yes, we can and will know the answer to any mathematical question. In an address in 1930 in German town Königsberg he famously said,

Wir müssen wissen — wir werden wissen. (‘We must know — we will know.’)

But will we?

The limits of Mathematics

Hilbert’s optimism turned out to be short-lived. In the same year, Austrian Mathematician Kurt Gödel demonstrated that there are limits to our knowledge in Mathematics by proving his famous incompleteness theorem.

Here’s a simplified way to understand Gödel’s theorem. Consider the following statement.

Statement S: This statement is not provable.

Now, suppose that within the context of Mathematics we could prove S to be true. But then, the statement S itself would be false, leading to an inconsistency. Okay, then let’s assume the opposite, that we cannot prove S within the context of Mathematics. But that would mean that S itself is true, and that Mathematics contains at least one statement that is true but cannot be shown to be true. Hence, Mathematics must be either inconsistent or incomplete. If we assume it to be consistent (statements cannot be true and false at the same time), this only leaves the conclusion that Mathematics is incomplete, i.e., there are true statements that simply cannot be shown to be true.

Gödel’s mathematical proof of the incompleteness theorem, which is much more complicated than I outlined here, fundamentally changed the view advertised by Hilbert that complete knowledge is feasible (“wir werden wissen”). In other words, if we assume Mathematics to be consistent, we are bound to discover true statements that are not provable.

For example, consider the Goldbach conjecture, according to which every even number is the sum of two primes:

6 = 3 + 3

8 = 3 + 5

10 = 3 + 7

12 = 7 + 5, and so on.

No one has yet found a counter-example, and there might be none, if the conjecture is true. Because of Gödel we know that there are true statements out there that have no proof, but unfortunately, there is no way to identify these statements. The Goldbach conjecture might be one of them, and if it is, then attempt of finding a proof would be a waste of time.

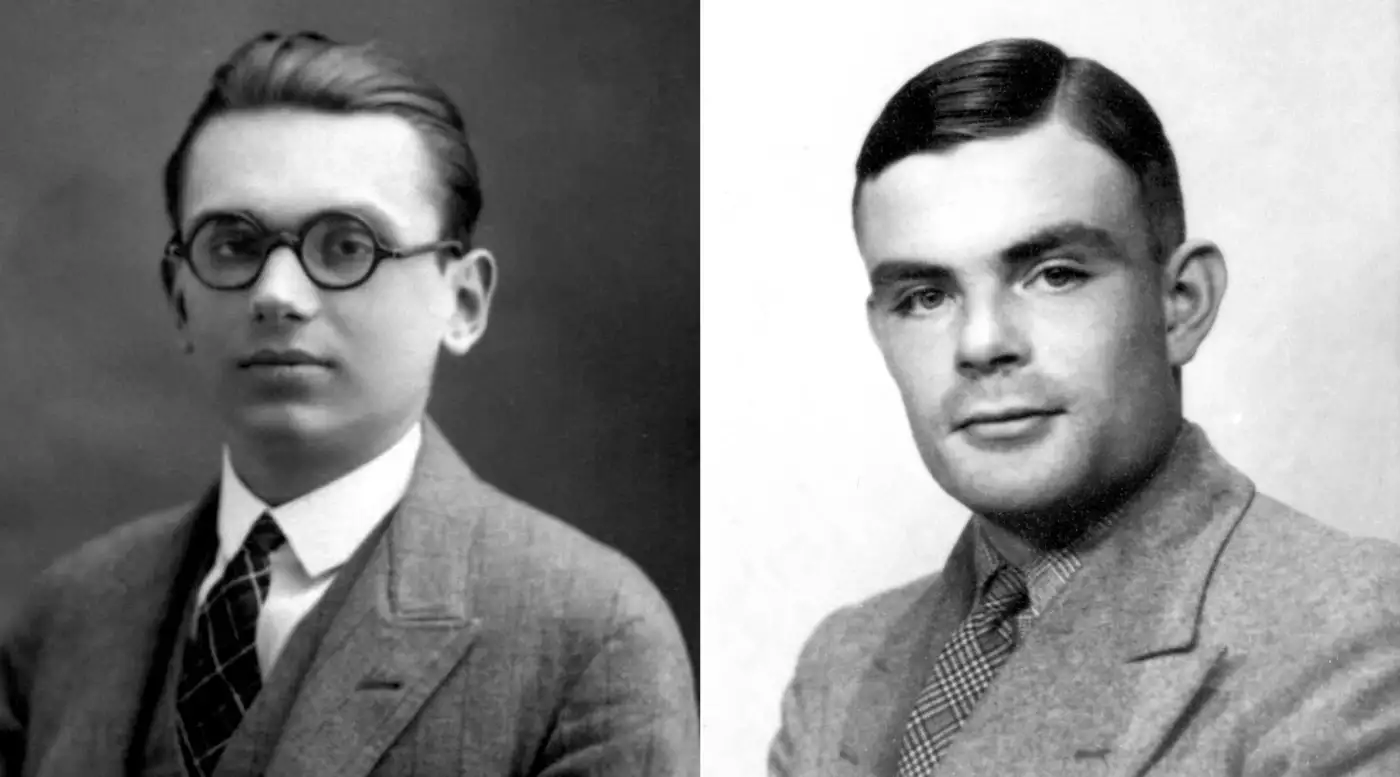

Kurt Gödel (left) and Alan Turing (right) (image source: Cantor’s Paradise)

The limits of computation

Alan Turing was a graduate student at Cambridge University when he first learned about Gödel’s incompleteness theorem. During that time, Turing was working on formulating the mathematical design of machines that could process any input and compute a result, similar to what Leibnitz had envisioned centuries earlier. These conceptualized machines are today known as Turing machines, and turned out to be the blueprint for the modern digital computer. In simple terms, a Turing machine can be likened to a modern computer program.

Turing was working on the so-called halting problem, which can be posed as follows:

Can there be a program that can determine whether another program will halt (finish execution) or not (loop forever)?

Turing proved that the answer to the halting problem is “No”, such a program cannot exist. Similar to Gödel’s work, his proof is a ‘proof by contradiction’. Assume that there exists a program halts() that determines whether a given program will halt or not. But then we can also construct the following program:

def g():

if halts(g):

loop_forever()

return

See what’s happening here? If g holds, g doesn’t hold, and if g doesn’t hold, g holds. Either way, we have a contradiction. Hence, the program halts() cannot exist.

While Gödel proved that Mathematics is incomplete, Turing proved that Computer Science is in some sense ‘incomplete’, too. Certain programs simply cannot exist. This is not just a theoretical curiosity: the halting problem has many practical implications in Computer Science today. For instance, if we want a compiler to find the fastest possible machine code for a given program, we are actually trying to solve the halting problem — and we already know that the problem is not solvable.

A complicated protein structure — predicting how proteins fold is an NP-problem. (image source: Nature)

Practical limits of knowledge: the P vs NP problem

By showing that there are problems that are fundamentally not solvable, Gödel and Turing demonstrated that there are theoretical limits to what we can ever know. But in addition, there are other problems that we could solve in theory, but not in practice because it simply takes too long to compute the solution. This is where we get into the distinction between P-problems and NP-problems.

P-problems are problems that that can be solved in ‘reasonable time’, where reasonable in this context means ‘polynomial’ (hence the P). The computational complexity of finding a solution to these problems grows with some power of the size of the input to the problem. Think multiplication or sorting problems.

NP-problems on the other hand are problems that cannot be solved in reasonable time. NP stands for non-deterministic polynomial, meaning that a solution can be confirmed, but not found, with polynomial computational complexity. The complexity of finding a solution for NP-problems is exponential instead of polynomial, which makes an enormous practical difference. Examples for NP-problems are optimal scheduling, predicting how proteins fold, encrypting messages, solving Sudoku puzzles, optimal packing (a.k.a. the knapsack problem), or optimal routing (a.k.a. the traveling salesman problem). Some problems, such as finding the discrete Fourier transform of a function, start out in NP, but ultimately end up in P because of the development of new, clever algorithms that simplify the solution.

One of the biggest unanswered questions in Computer Science today is the P vs NP question: Is P equal to NP, or not? In other words, for all problems for which we can confirm a solution in reasonable time, can we also find a solution in reasonable time?

The P vs NP question is so important that it is included in the list of the ‘Millenium prize problems’, and you’ll win one Million dollars if you find the answer. It is hard to overstate the significance of the problem: a world in which P=NP would be fundamentally different from a world in which P≠NP. If P=NP, then we could say with certainty that there is a much faster way to solve Sudoku puzzles, or to predict how proteins fold, we just have not found that method yet. Needless to say, knowing how proteins fold could have all sorts of real-world implications, like understanding Alzheimer’s disease or curing cancer.

Most scientists today believe that P does not equal NP, but will we ever know for sure? The P vs NP question itself might be similar to Hilbert’s Entscheidungsproblem or Turing’s Halting problem: there might simply be no answer to the question.

https://towardsdatascience.com/t ... wledge-b59be67fd50a |

|